The Leak Didn't Reveal a Secret: The Harness IS the Product

512,000 lines of code leaked. Not one of them was the model.

On March 31, Anthropic accidentally published the full source code of Claude Code, the AI coding tool I use every day. A debugging file left in a public package. Within hours, the entire codebase was mirrored, forked, and dissected by thousands of developers.

Twenty-two million views on X. Sixty thousand copies in twenty-four hours.

Everyone assumed they were seeing the secret engine — the thing that makes Claude Code special. The hidden sauce. The proprietary magic.

They weren't.

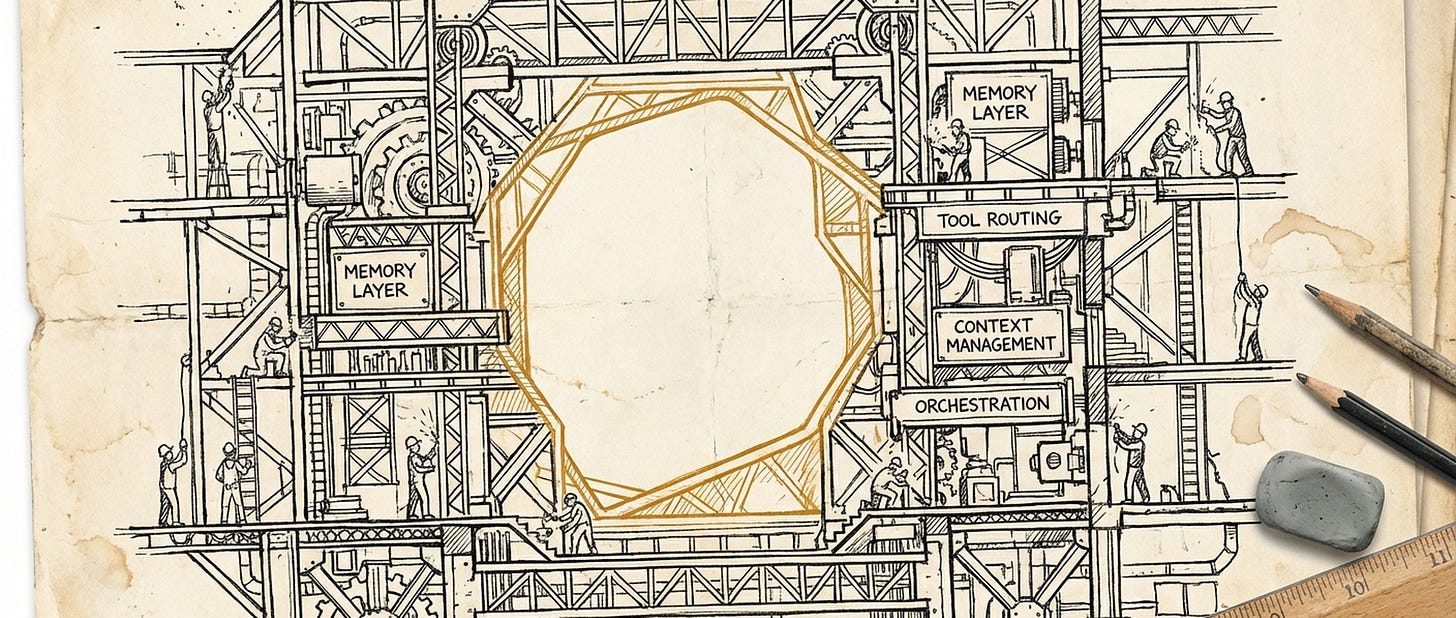

What leaked was orchestration. Memory systems. Tool management. Prompt caching strategies. Subagent coordination. Five different methods for compacting context so the model doesn't forget what matters.

Not one line of model training code. Because the model isn't in there.

The model — Claude, the large language model — lives on Anthropic's servers. What leaked was everything around it. The layer that decides what the model sees, when it sees it, how it acts on it, and what it remembers afterward.

Practitioners have a name for this layer: the harness.

I'd been reading about harness design for months — articles, research, other practitioners describing the patterns they'd found. And I'd been building one myself, without quite seeing it as a single thing. Prompts here. Memory files there. Workflows. Tools. Commands that chain into other commands.

I called it "my setup." Turns out it was architecture.

When I read about the leaked code, I didn't learn a new approach. I recognized the shape of something I'd been building toward, and saw how much deeper it goes. My setup is a sketch. Anthropic's is an engineering project. Same patterns, different scale. A three-layer memory system. Sixty-six tools partitioned by whether they can run in parallel. Subagents that share prompt caches so parallelism comes almost for free.

A study published the same week, from Stanford and MIT, even put numbers to it. Same model, two different harnesses: one scored 74.7%, the other 76.4%. The difference wasn't the model. It was what surrounded it: what context the model saw, how tasks were decomposed, when results were kept or discarded. Change nothing in the model. Change the harness. The results change.

Sixty thousand people cloned the code overnight. Not model weights — harness code. A developer in South Korea rewrote the entire thing in Python before sunrise. Another started a Rust version. One prominent AI commentator's first instinct: "plug it into a system that can self-improve the harness."

They weren't stealing a secret. They were recognizing something they'd been trying to build.

That's the part nobody's saying clearly enough: the demand was already there. Practitioners everywhere — building prompts, chaining tools, designing memory systems — were doing harness work. The leak didn't create the insight. It confirmed it at scale.

If the harness is the product, and the harness architecture is now public — cloned, rewritten, studied by every competitor and every open-source project — what's the actual competitive advantage? An uncomfortable question.

The design space appears to be narrowing. Claude Code, Cursor, OpenAI's Codex, the open-source alternatives: they're converging on similar patterns. Memory layers. Subagent orchestration. Context management. Tool ecosystems. The Stanford and MIT study even found that when you optimize harnesses automatically (not the model, just the orchestration), the results improve dramatically. Same engine, better cockpit.

Did the leak reveal a secret — or a convergence?

I don't have a clean answer. Model access still matters — Claude Code is tuned for Claude, and that pairing runs deep. Execution speed matters. Data flywheels matter. But the architecture itself? That's in the open now. And it was heading there anyway.

The leak didn't reveal a secret. It showed that what practitioners were already building is the product, not the scaffolding. And sixty thousand people, cloning code in the middle of the night, said the same thing without using any words at all.

What I do know is this: the thing I'd been building — prompts, memory, workflows, the orchestration layer around the model — that's the thing that matters. Not which model I pick. Not the next benchmark. The harness.

Catch you next time.

— Ambròs

Co-created with AI. The judgment is mine.